How Multi-Agent Intelligence Can Reshape Modern Enterprise IT Solutions

Imagine a SOAR system that raises an alert about suspicious logins but doesn’t autonomously dive into the surrounding logs to correlate potential lateral-movement activity, or suggest or perform the remediation needed to quarantine the affected host. The alerts are valuable, but a human still has to dig through myriad logs from multiple sources to trace the attack path, understand the impact, and mitigate the incident.

With the help of AI Agents and the Model Context Protocol (MCP) for tool access, organizations building enterprise AI solutions can get several steps closer to a fully autonomous system. Recent developments in open-source autonomous agents (such as OpenClaw and Ralph Wiggum loops) have shown that AI is no longer just a chatbot waiting for a prompt; it is an active participant capable of executing complex workflows. This area is evolving very fast, yet the technology is already mature enough to mark the early era of agents, much as TCP/IP did for the web.

From Alerts to Autonomous Investigations

Traditional SOAR platforms are very good at orchestrating playbooks and routing alerts. However, they rely on human analysts for context gathering and decision-making. AI Agents are a natural complement here: they can execute complex investigation workflows described in natural language and can even run critical containment steps without waiting on slow manual intervention, forming the backbone of modern AI-enabled enterprise security solutions.

Multi-agent systems are built on one core concept: a shared state contract that defines how each property evolves as execution proceeds and which agents can modify which properties of the shared state. Typically, an agent is responsible only for delivering a “delta” to the state contract, on which further actions or analysis can then be performed.
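
To make the contract idea concrete, here is a minimal Python sketch. The property names, the agent names, and the `WRITE_PERMISSIONS` map are all hypothetical, chosen only for illustration; a real contract would be shaped by your own workflow.

```python
from dataclasses import dataclass, field
from typing import Any

# Hypothetical ownership map: which agent may write which state property.
WRITE_PERMISSIONS: dict[str, set[str]] = {
    "soc_l1": {"findings", "severity"},
    "notifier": {"notified"},
    "report_generator": {"report"},
}


@dataclass
class SharedState:
    """The shared state contract that every agent reads from."""
    alert_id: str
    findings: list[str] = field(default_factory=list)
    severity: str = "unknown"
    notified: bool = False
    report: str = ""


def apply_delta(state: SharedState, agent: str, delta: dict[str, Any]) -> SharedState:
    """Merge an agent's delta into the state, rejecting writes it does not own."""
    allowed = WRITE_PERMISSIONS.get(agent, set())
    forbidden = set(delta) - allowed
    if forbidden:
        raise PermissionError(f"{agent} may not modify: {sorted(forbidden)}")
    for key, value in delta.items():
        if key == "findings":
            # List properties grow over time instead of being overwritten.
            state.findings.extend(value)
        else:
            setattr(state, key, value)
    return state
```

Because every write goes through `apply_delta`, an agent that tries to touch a property it does not own fails loudly instead of silently corrupting the state.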

Taking architectural inspiration from recent agentic breakthroughs, a robust AI Agent is defined by four key characteristics:

- Planning: The agent divides the larger complex execution plan into smaller steps, enabling it to execute the plan reliably and stay on track. Several research-based solutions use this technique to build multi-agent systems. You will often see some sort of “planner” agent in the mix.

- Memory & State Persistence: Helps AI Agents make informed and precise decisions based on gathered context. Modern agents maintain “persistent memory” (often as plain-text diaries or structured state files) so they can remember user preferences and past alerts across long-running sessions. Fun fact: OpenClaw stores its guidelines and skills as simple markdown or txt files in your local persistent storage. We can enhance and store these in a shared state (JSON/YAML) contract for our agents as part of a scalable end-to-end AI solution.

- Proactivity (The “Heartbeat”): Unlike a standard LLM that sits idle until you type a message, autonomous agents operate on a continuous loop or “heartbeat.” They wake up periodically to scan SIEM logs, check task queues, and trigger investigations proactively.

- Tool Use (Skills): Interfaces with external APIs or services to gather information or retrieve logs. The arrival of MCP has made interfacing with these external data sources modular, reliable, and much easier to implement.
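
The “heartbeat” in particular is easy to picture as code. The sketch below is one possible shape, not a reference implementation: `poll` and `investigate` are hypothetical callables standing in for a SIEM query and an investigation workflow, and `max_ticks` exists only so the loop can be stopped in a demo or a test.

```python
import time
from typing import Callable, Iterable, Optional


def run_heartbeat(
    poll: Callable[[], Iterable[str]],
    investigate: Callable[[str], None],
    interval_s: float = 60.0,
    max_ticks: Optional[int] = None,
) -> int:
    """Wake up every `interval_s` seconds, poll for new alerts, investigate each.

    Returns the number of alerts handled. `max_ticks=None` runs forever.
    """
    handled = 0
    tick = 0
    while max_ticks is None or tick < max_ticks:
        for alert in poll():
            investigate(alert)
            handled += 1
        tick += 1
        # Sleep between ticks, but not after the final one.
        if max_ticks is None or tick < max_ticks:
            time.sleep(interval_s)
    return handled
```

In production the loop would more likely be driven by a scheduler or a queue consumer, but the principle is the same: the agent acts on a timer, not on a prompt.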

The Core Engine: Model Context Protocol (MCP)

At the core of each AI Agent is MCP. MCP is a standardized protocol for providing context to LLMs via on-demand tool execution, acting much like the “Skills” or plugins that allow modern agents to connect to local environments. MCP provides the following capabilities:

- Context Retrieval: AI Agents can query relevant data sources (SIEM logs, threat intelligence platforms, EDR platforms) using natural language instructions. The same data sources are therefore available to both AI specialists and autonomous agents, without changing SOAR playbooks each time a data source is added or removed.

- Context Enrichment: AI Agents can use the context (data) provided by each platform’s MCP Server to run their investigation tasks and add more context to the base alert.

- Action Execution: Based on the alert and the context each agent has gathered, agents can automatically take steps to stop an attack from spreading.
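
Under the hood, an MCP tool invocation is a JSON-RPC 2.0 message with the method `tools/call`. The sketch below builds such a request and reads the text parts of a result. The tool name `search_siem_logs` and its arguments are hypothetical, and the transport (stdio or HTTP) is left out entirely; in practice an MCP SDK would handle both.

```python
import itertools
import json
from typing import Any

_request_ids = itertools.count(1)


def build_tool_call(tool_name: str, arguments: dict[str, Any]) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


def extract_text(response_json: str) -> str:
    """Join the text parts of a tool result; raise if the call failed."""
    response = json.loads(response_json)
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "JSON-RPC error"))
    result = response["result"]
    text = "\n".join(
        part["text"] for part in result.get("content", []) if part.get("type") == "text"
    )
    if result.get("isError"):
        raise RuntimeError(text or "tool reported an error")
    return text


# Hypothetical tool and arguments, for illustration only.
message = build_tool_call("search_siem_logs", {"query": "failed logins", "hours": 24})
```

The agent never needs to know how the SIEM is queried; it only needs the tool name and argument schema that the MCP Server advertises.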

When Do We Need Multi-Agent Systems?

While a single AI agent can perform a specific task like detecting suspicious logins, it operates within a limited scope. Multi-agent systems take this further by enabling multiple specialized agents to collaborate, share context, and coordinate actions across domains, creating a far more robust and scalable system often seen in advanced enterprise AI solutions.

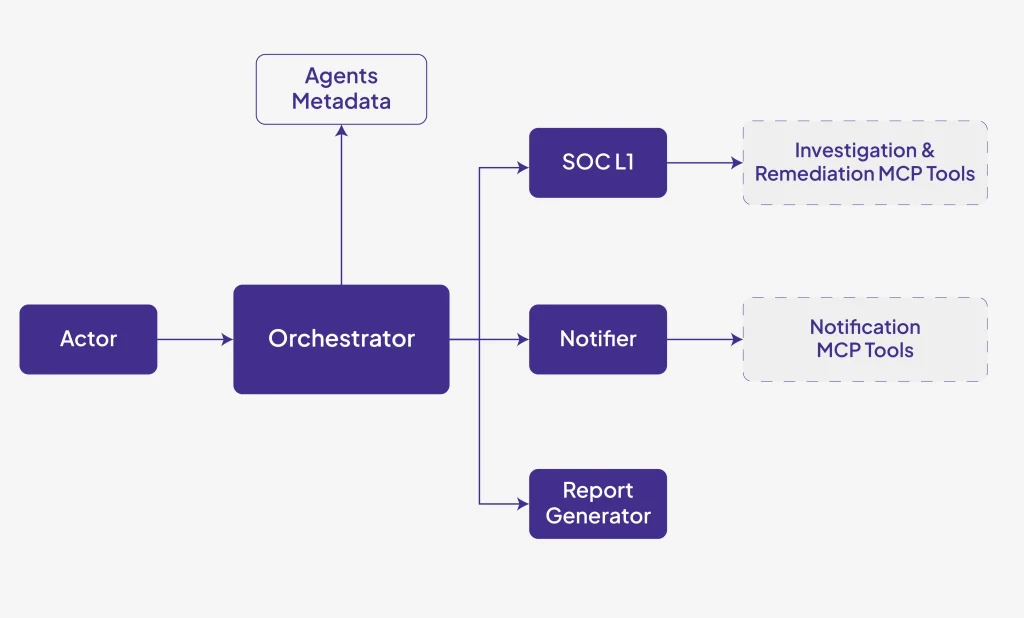

The design shown here is a simple one that we created to demonstrate the use of AI in SOC operations as part of a broader AI-enabled enterprise security architecture.

As part of this system, several specialized agents operate in tandem. Each has its own area of expertise:

- Orchestrator: Knows every sub-agent at its disposal and what each one is responsible for. Delegates tasks to the appropriate agent, which keeps the system loosely coupled.

- SOC L1: Performs the preliminary investigation tasks, such as searching for data through SIEM tools or retrieving threat information about IOCs from threat enrichment platforms.

- Notifier: Sends notifications about the incident, or escalates it, based on the preliminary investigation reports from the SOC L1 agent.

- Report Generator: Generates a summary of the investigation the AI Agent has done, detailing the tools called and decisions taken at each step.
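
The division of labor above can be sketched in a few lines of Python. Everything here is a simplified stand-in: the agents are plain functions rather than LLM-backed workers, the five-failed-logins threshold is an arbitrary assumption, and the plan is passed in by hand where a real orchestrator would derive it.

```python
from typing import Any, Callable

State = dict[str, Any]
Agent = Callable[[State], State]  # an agent reads the state and returns only its delta


def soc_l1(state: State) -> State:
    # Stand-in for SIEM searches and IOC enrichment.
    failed = state["failed_logins"]
    severity = "high" if failed >= 5 else "low"  # arbitrary demo threshold
    return {"severity": severity, "findings": [f"{failed} failed logins"]}


def notifier(state: State) -> State:
    # Escalate only when the preliminary investigation says it matters.
    return {"escalated": state["severity"] == "high"}


def report_generator(state: State) -> State:
    steps = ", ".join(state["trace"])
    return {
        "report": f"Alert {state['alert_id']}: severity={state['severity']}, "
        f"escalated={state['escalated']}, steps={steps}"
    }


class Orchestrator:
    """Knows the available agents and delegates each step of a plan to one of them."""

    def __init__(self, agents: dict[str, Agent]):
        self.agents = agents

    def run(self, alert: State, plan: list[str]) -> State:
        state: State = dict(alert, trace=[])
        for name in plan:
            delta = self.agents[name](state)
            state.update(delta)
            state["trace"].append(name)  # record which agent ran, for the report
        return state


orchestrator = Orchestrator(
    {"soc_l1": soc_l1, "notifier": notifier, "report_generator": report_generator}
)
final = orchestrator.run(
    {"alert_id": "A-1", "failed_logins": 7},
    ["soc_l1", "notifier", "report_generator"],
)
```

Agents never call each other directly; swapping one out only means registering a different function under the same name.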

The above example is described in the context of security operations; however, the highly modular, domain-agnostic architecture can be tweaked for use in almost any IT sector as part of an end-to-end AI security solution strategy.

7 Critical Considerations for Robust Agentic Design

While the evolution of multi-agent systems is exciting, giving AI the “hands” to execute tasks introduces significant risks. Recent high-profile incidents with autonomous agents have highlighted why relying on basic prompts is not enough. To build a robust system, the following limitations and architectural safeguards must be carefully considered by organizations implementing Enterprise AI Solutions:

Implementation of Appropriate Guardrails:

Guardrails are essential to protect confidential user information (PII, financial data) and enforce responsible AI practices. Guardrails can be custom-built or integrated via services like Google Model Armor.

Managing “Context Compaction”:

When an agent runs continuously, its context window eventually fills up, and it must compress or summarize its context to keep functioning. If not designed correctly, an agent might “forget” critical initial instructions during this compaction (e.g., forgetting a rule like “always ask for human confirmation before deleting”). Hard rules must be saved in persistent storage outside the standard context window.

Sandboxing and Execution Boundaries:

Because these agents take real actions, they require strict operational boundaries. Running agents in isolated environments ensures that if an agent hallucinates or processes a malicious log entry (prompt injection), it cannot execute destructive commands on the broader network.

Hard Kill Switches & Human-In-The-Loop (HITL):

Never rely purely on natural language commands to stop an agent. If an agent goes rogue or gets caught in an execution loop, typing “stop” might be ignored. IT systems must implement hardwired, out-of-band “kill switches” to terminate processes immediately, alongside cryptographically signed HITL approvals for high-impact actions such as isolating a host or deleting data.

Authentication:

When running instructions using MCP, we must ensure the agent is actually authorized to perform the tasks. Currently, OAuth 2.0 can be used to authenticate and authorize the client in the MCP Server.

Cost:

Running multiple agents, especially those continuously monitoring real-time data streams, can significantly increase token usage and induce higher costs per run compared to single-agent systems.

Hallucinations:

Agents can generate incorrect or misleading outputs if the tools exposed by the related MCP Servers are defined too abstractly. To reduce hallucinations, developers must rigorously manage input context and utilize structured outputs, few-shot prompting, and automated feedback mechanisms.
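
Two of the safeguards above lend themselves to a short sketch: hard rules that survive context compaction, and signed human approvals for destructive actions. This is a minimal illustration under stated assumptions. `HARD_RULES` stands in for rules loaded from persistent storage, the summary line is a placeholder for an LLM-generated summary, and the HMAC with a shared secret is just one possible way to make an approval verifiable (an asymmetric signature would be a reasonable alternative).

```python
import hashlib
import hmac
from typing import Optional

# Hypothetical hard rules, kept in persistent storage rather than chat history.
HARD_RULES = ["Always ask for human confirmation before deleting."]


def compact_context(messages: list[str], keep_last: int = 3) -> list[str]:
    """Shrink the history but re-inject the hard rules verbatim every time."""
    dropped = messages[:-keep_last] if keep_last else messages
    recent = messages[-keep_last:] if keep_last else []
    summary = f"[summary of {len(dropped)} earlier messages]"  # placeholder for an LLM summary
    return HARD_RULES + [summary] + recent


def sign_approval(secret: bytes, action: str, target: str) -> str:
    """Run on the approver's side: sign one specific action on one specific target."""
    return hmac.new(secret, f"{action}:{target}".encode(), hashlib.sha256).hexdigest()


def execute_destructive(secret: bytes, action: str, target: str, approval: Optional[str]) -> str:
    """Refuse to act unless the approval matches this exact action and target."""
    expected = sign_approval(secret, action, target)
    if approval is None or not hmac.compare_digest(expected, approval):
        raise PermissionError(f"{action} on {target} requires a valid human approval")
    return f"{action} executed on {target}"
```

The key design point is that neither check depends on the model remembering anything: the rules are re-injected by code, and the approval is verified by code.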

At its core, the developer’s primary job in any multi-agent system is managing the context that goes into each agent. Once you perfect this and layer in strict architectural guardrails, it’s down to your prompting skills to unlock the true potential of your agentic workforce.

Autonomous agents and multi-agent systems are rapidly redefining how modern IT environments detect, investigate, and respond to complex events.

At Crest Data, our AI specialists help enterprises design and operationalize intelligent Enterprise AI Solutions and scalable enterprise AI and ML solutions that move beyond insights to real action through an integrated end-to-end AI solution approach.

Learn more about our AI capabilities here: https://www.crestdata.ai/solutions/ai-and-ml/

Thought Leader: Colwin Fernandes